1- Project Overview

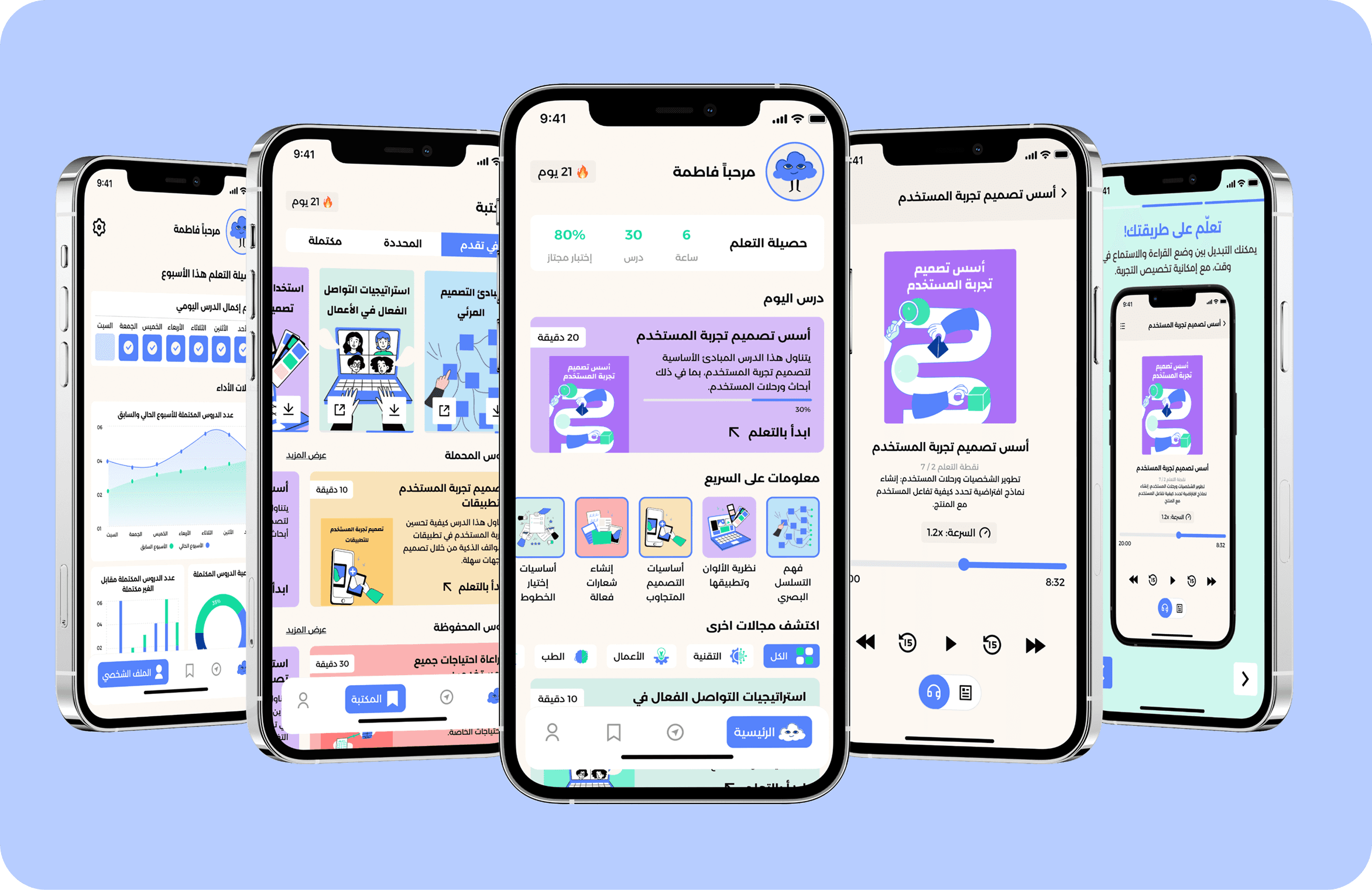

The Quran Journal is a responsive, bilingual web application designed to support reflective Quranic study. It enables users to track verses, themes, lessons, and personal reflections within a calm, distraction-free interface.

Key Product Characteristics:

Bilingual support (Arabic RTL and English LTR)

Fully responsive across desktop and mobile

Personal journaling with persistent local storage

Integration with Quran APIs for verse, translation, and tafsir

Light and Dark mode support
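The persistent local storage listed above can be illustrated with a minimal sketch. It assumes entries are serialized as JSON under a single key. The `JournalEntry` fields, the `STORAGE_KEY` value, and the helper names are hypothetical and are not taken from the project's code.

```typescript
// Hypothetical shape of a journal entry; the field names are assumptions.
interface JournalEntry {
  id: string;
  verseKey: string; // e.g. "2:255" (surah:ayah)
  theme: string;
  reflection: string;
  updatedAt: number; // epoch milliseconds
}

// Minimal subset of the Web Storage API, so the same code works with
// window.localStorage in the browser or an in-memory stand-in elsewhere.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

const STORAGE_KEY = "quran-journal:entries"; // assumed key name

function loadEntries(store: KeyValueStore): JournalEntry[] {
  const raw = store.getItem(STORAGE_KEY);
  if (raw === null) return [];
  try {
    const parsed: unknown = JSON.parse(raw);
    return Array.isArray(parsed) ? (parsed as JournalEntry[]) : [];
  } catch {
    // Corrupted data should not crash the app; start from an empty journal.
    return [];
  }
}

function saveEntry(store: KeyValueStore, entry: JournalEntry): JournalEntry[] {
  // Replace an existing entry with the same id, otherwise append.
  const others = loadEntries(store).filter((e) => e.id !== entry.id);
  const next = [...others, entry];
  store.setItem(STORAGE_KEY, JSON.stringify(next));
  return next;
}

// In-memory store for use outside the browser (tests, server rendering).
function createMemoryStore(): KeyValueStore {
  const data = new Map<string, string>();
  return {
    getItem: (key) => data.get(key) ?? null,
    setItem: (key, value) => {
      data.set(key, value);
    },
  };
}
```

In the browser, `window.localStorage` satisfies `KeyValueStore` directly, so `saveEntry(window.localStorage, entry)` would persist across reloads.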

The application was built as a single-page React app using modern front-end practices, with inline styling, custom internationalization logic, and a deployment-ready architecture.
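To make "custom internationalization logic" with inline styling concrete, here is a minimal sketch of how locale, text direction, and inline styles could fit together. The message keys, strings, and function names are assumptions for illustration, not the app's actual implementation.

```typescript
type Locale = "ar" | "en";
type Direction = "rtl" | "ltr";

// Illustrative message catalogue; keys and strings are assumptions.
const messages: Record<Locale, { [key: string]: string | undefined }> = {
  en: {
    "journal.title": "My Journal",
    "entry.count": "Entries: {count}",
    "action.save": "Save",
  },
  ar: {
    "journal.title": "مذكراتي",
    "entry.count": "عدد المدخلات: {count}",
  },
};

function directionFor(locale: Locale): Direction {
  return locale === "ar" ? "rtl" : "ltr";
}

function translate(
  locale: Locale,
  key: string,
  params: Record<string, string | number> = {}
): string {
  // Fall back to English, then to the key itself, so missing strings stay visible.
  const template = messages[locale][key] ?? messages.en[key] ?? key;
  // Replace {name} placeholders; unknown placeholders are left untouched.
  return template.replace(/\{(\w+)\}/g, (match: string, name: string) =>
    name in params ? String(params[name]) : match
  );
}

// Inline style object that a React component could spread into `style`.
function containerStyle(locale: Locale): { direction: Direction; textAlign: "right" | "left" } {
  const direction = directionFor(locale);
  return { direction, textAlign: direction === "rtl" ? "right" : "left" };
}
```

A component could then render `<div style={containerStyle(locale)}>{translate(locale, "journal.title")}</div>`, keeping direction and copy driven by one locale value.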

What makes this project unique is not just the product but also the process used to systematize it.

2- Process

Reverse Engineering: From Code → Design

1. AI-Orchestrated Development

Used ChatGPT to write structured prompts, which Claude then followed to build the website.

Claude generated the initial React-based application.

Continued refining and fixing the code using Claude and DeepSeek.

This phase prioritized rapid iteration, responsiveness, and accurate RTL/LTR handling.

2. Preparing Claude for Figma

Before attempting to generate or interpret designs in Figma:

I uploaded a Figma skills reference file (from Figma documentation) to Claude.

This enabled Claude to better understand Figma structure, nodes, variables, and components.

The reference improved Claude's reasoning about Figma, though its output still required iteration and validation.

3. Early Attempt: AI → Figma Variables

Initially, I used Claude to:

Generate Figma local variables (colors, typography, tokens).

Attempt mapping them to design structures.
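As a rough illustration of this step, the sketch below prepares color tokens for Figma variables. Figma stores color channels in the 0 to 1 range, so hex values need converting first. The token names, hex values, and the `VariablePlan` shape are hypothetical; inside a plugin, each plan entry could be handed to the Figma Plugin API (`figma.variables.createVariable` and `setValueForMode`), which is only referenced in comments here so the example runs outside Figma.

```typescript
// Figma represents color channels as numbers in the 0..1 range.
interface Rgb {
  r: number;
  g: number;
  b: number;
}

// Hypothetical token definition with one hex value per theme.
interface ColorToken {
  name: string;
  light: string;
  dark: string;
}

interface VariablePlan {
  name: string;
  valuesByMode: { Light: Rgb; Dark: Rgb };
}

function hexToRgb(hex: string): Rgb {
  const clean = hex.trim().replace(/^#/, "");
  if (!/^[0-9a-f]{6}$/i.test(clean)) {
    throw new Error(`Unsupported color format: ${hex}`);
  }
  const value = parseInt(clean, 16);
  return {
    r: ((value >> 16) & 0xff) / 255,
    g: ((value >> 8) & 0xff) / 255,
    b: (value & 0xff) / 255,
  };
}

// Build a plain description of the variables to create.
// In a plugin, each entry would become one COLOR variable with a value per mode.
function planColorVariables(tokens: ColorToken[]): VariablePlan[] {
  return tokens.map((token) => ({
    name: token.name,
    valuesByMode: { Light: hexToRgb(token.light), Dark: hexToRgb(token.dark) },
  }));
}

// Example tokens; names and values are assumptions.
const exampleTokens: ColorToken[] = [
  { name: "surface/default", light: "#ffffff", dark: "#121212" },
  { name: "text/primary", light: "#1a1a1a", dark: "#f5f5f5" },
];

const examplePlan = planColorVariables(exampleTokens);
```

Separating the plan from the Figma calls makes the generated values easy to review before anything is written into the file.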

Challenges:

Weak connection between variables and actual design nodes.

Poor handling of text styles.

Inability to convert code into accurate visual layouts.

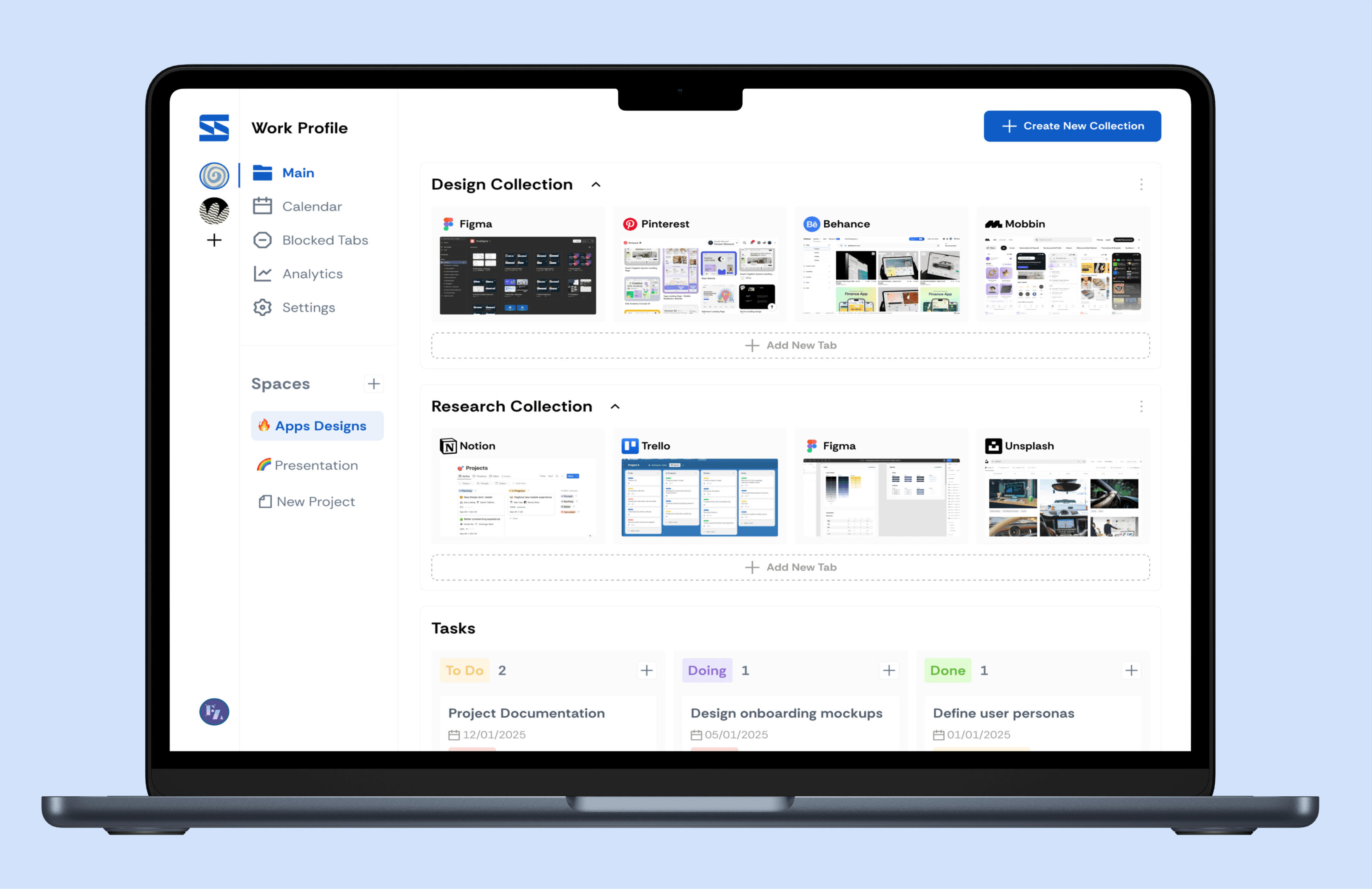

4. Converting Code into Design

To overcome this:

Used the HTML to Design Figma plugin.

Converted the live website into Figma frames (Arabic + English).

Outcomes:

Pixel-accurate layouts.

Real content and spacing.

True responsive proportions.

This created a production-aligned starting point inside Figma.

5. Systematizing the Design with AI

With frames in place:

Claude analyzed more than 2,000 nodes across the screens.

Identified colors, typography, and repeated patterns.

Generated and connected local variables programmatically.

Defined missing tokens, including dark mode values.

Identified common UI patterns and converted them into reusable local components (e.g., buttons, tags).
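The node analysis above can be sketched as a tree walk that counts how often each fill color appears and treats repeated colors as token candidates. `DesignNode` is a simplified stand-in for a real Figma node, and the threshold of two uses is an assumption.

```typescript
// Simplified stand-in for a Figma node; real nodes carry many more fields.
interface DesignNode {
  name: string;
  fillHex?: string;
  children?: DesignNode[];
}

// Walk the tree iteratively and count occurrences of each fill color.
function countFillColors(root: DesignNode): Map<string, number> {
  const counts = new Map<string, number>();
  const stack: DesignNode[] = [root];
  while (stack.length > 0) {
    const node = stack.pop();
    if (!node) break;
    if (node.fillHex) {
      const key = node.fillHex.toLowerCase(); // treat #FFF000 and #fff000 as one color
      counts.set(key, (counts.get(key) ?? 0) + 1);
    }
    if (node.children) {
      for (const child of node.children) stack.push(child);
    }
  }
  return counts;
}

// Colors used at least `minUses` times, most frequent first (ties sorted by hex).
function tokenCandidates(counts: Map<string, number>, minUses = 2): string[] {
  return Array.from(counts.entries())
    .filter(([, uses]) => uses >= minUses)
    .sort((a, b) => b[1] - a[1] || (a[0] < b[0] ? -1 : 1))
    .map(([hex]) => hex);
}
```

The same counting approach extends to font sizes or spacing values by swapping the field that is read from each node.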

However, plugin-generated variables were not clean:

Inconsistent naming.

Redundant tokens.

Missing semantic structure.

Resolution:

Used Claude to clean and standardize variables.

Established proper naming conventions.

Mapped semantic tokens (surface, text, accent, tags).

Completed missing values.

Refined components to ensure consistency and scalability across screens.
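The cleanup described above (consistent names, no redundant tokens, a semantic layer) can be sketched as three small steps. The naming convention, the sample variable names, and the alias table are assumptions chosen for illustration, not the conventions the project actually adopted.

```typescript
// A raw variable as a plugin might emit it; value is a hex color.
interface RawVariable {
  name: string;
  value: string;
}

// Normalize to lower-case, slash-separated groups with hyphenated words.
function normalizeName(name: string): string {
  return name
    .split("/")
    .map((part) =>
      part
        .trim()
        .toLowerCase()
        .replace(/[^a-z0-9]+/g, "-")
        .replace(/^-+|-+$/g, "")
    )
    .filter((part) => part.length > 0)
    .join("/");
}

// Keep the first variable seen for each distinct value and drop the rest.
function dedupeByValue(variables: RawVariable[]): RawVariable[] {
  const seen = new Map<string, RawVariable>();
  for (const variable of variables) {
    const key = variable.value.toLowerCase();
    if (!seen.has(key)) {
      seen.set(key, { name: normalizeName(variable.name), value: key });
    }
  }
  return Array.from(seen.values());
}

// Hypothetical semantic layer: semantic alias -> primitive token name.
const semanticAliases: { [alias: string]: string | undefined } = {
  "surface/default": "color/white",
  "accent/default": "color/primary-green-500",
};

// Resolve a semantic alias to its concrete value, if both layers define it.
function resolveSemantic(alias: string, primitives: RawVariable[]): string | undefined {
  const target = semanticAliases[alias];
  if (target === undefined) return undefined;
  return primitives.find((p) => p.name === target)?.value;
}
```

Keeping primitives and semantic aliases separate means a dark mode only needs a second set of alias targets rather than a second set of components.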

6. Managing AI Limitations

A key observation:

In my experience, Claude's output quality degraded as chat sessions grew long.

Solution:

Broke tasks into smaller, focused prompts.

Reset context frequently.

Treated the AI tools as modular utilities rather than as one continuous assistant.
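One way to picture "smaller, focused prompts" is to split a large list of nodes into batches and build one self-contained prompt per batch, each sent in a fresh session. This is a sketch under that assumption; the prompt wording and batch size are hypothetical.

```typescript
// Split a list into consecutive batches of at most `size` items.
function chunk<T>(items: readonly T[], size: number): T[][] {
  if (!Number.isInteger(size) || size <= 0) {
    throw new RangeError("size must be a positive integer");
  }
  const batches: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Build one standalone prompt per batch so no prompt relies on earlier chat history.
function buildPrompts(task: string, nodeNames: readonly string[], batchSize: number): string[] {
  return chunk(nodeNames, batchSize).map((batch, index, all) =>
    [
      `Task: ${task}`,
      `Batch ${index + 1} of ${all.length}`,
      ...batch.map((name) => `- ${name}`),
    ].join("\n")
  );
}
```

Because every prompt restates the task, a batch can be retried on its own if the output for it needs another pass.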

3- Conclusion & Lessons Learned

AI tools are powerful but specialized: each tool performed best in a specific role rather than end-to-end.

Designing in code first improved realism, responsiveness, and handling of bilingual layouts.

AI-generated systems require human refinement to achieve clarity, structure, and usability.

Providing structured context (like Figma skills documentation) improves AI reasoning but does not eliminate execution gaps.

Managing prompt size and context is essential for maintaining AI performance and output quality.

AI can accelerate system creation (tokens, components), but design quality still depends on human judgment and system thinking.

4- Next Steps

Refine journaling flows based on user feedback.

Improve accessibility (contrast, typography scaling, readability).

Position the product as an ongoing charity initiative with continuous value.

Turn the AI workflow into a repeatable, documented process.
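For the contrast part of the accessibility work listed above, color tokens could be checked against the WCAG 2.x contrast formula. The sketch below implements that formula; the function names are hypothetical, and the 4.5:1 and 3:1 thresholds are the WCAG AA minimums for normal and large text.

```typescript
// Parse "#rrggbb" into 8-bit channels.
function parseHex(hex: string): [number, number, number] {
  const clean = hex.trim().replace(/^#/, "");
  if (!/^[0-9a-f]{6}$/i.test(clean)) {
    throw new Error(`Unsupported color format: ${hex}`);
  }
  const value = parseInt(clean, 16);
  return [(value >> 16) & 0xff, (value >> 8) & 0xff, value & 0xff];
}

// Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition.
function channelToLinear(channel8: number): number {
  const c = channel8 / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

function relativeLuminance(hex: string): number {
  const [r, g, b] = parseHex(hex);
  return 0.2126 * channelToLinear(r) + 0.7152 * channelToLinear(g) + 0.0722 * channelToLinear(b);
}

// Contrast ratio between two colors, from 1 (identical) to 21 (black on white).
function contrastRatio(a: string, b: string): number {
  const la = relativeLuminance(a);
  const lb = relativeLuminance(b);
  const lighter = Math.max(la, lb);
  const darker = Math.min(la, lb);
  return (lighter + 0.05) / (darker + 0.05);
}

// WCAG AA: at least 4.5:1 for normal text, 3:1 for large text.
function meetsAA(foreground: string, background: string, largeText = false): boolean {
  return contrastRatio(foreground, background) >= (largeText ? 3 : 4.5);
}
```

Running `meetsAA` over each text and surface token pair in both light and dark modes would flag combinations that need adjusting.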